The Singularity Doesn't Need Paul Allen to Understand

Lincoln Cannon

13 October 2011 (updated 26 May 2026)

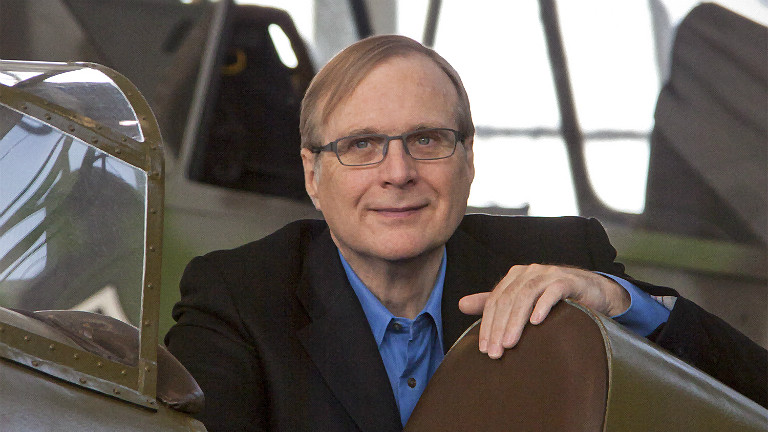

Like others before him, Paul Allen says that the “singularity is not near.” We will not anytime soon engineer computers superior to human brains, he argues. His argument is based on the observation that human biology, neurology and cognition are highly complex, and he concludes we will need to understand this complexity before we can match or exceed it with our computers. Also like others before him, Paul is probably wrong because the Singularity does not require understanding.

Scanning technology permits us to model and simulate systems that we do not understand. Most relevant here, brain scanning technology is increasing in resolution at an exponential rate, which matters all the more because human brains are the most complex objects known to humanity. Assuming the trend continues, we will be able to model and simulate human brains sometime this century, unless current expert assessments of the essential degree of complexity of the human brain are incorrect by many orders of magnitude.
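To make the arithmetic behind that extrapolation concrete, here is a minimal sketch. Under steady exponential improvement, the time needed to close a resolution gap is the doubling time multiplied by the base-2 logarithm of the gap. Every number below is hypothetical and chosen only for illustration (the size of the gap and the two-year doubling time are assumptions, not measurements and not figures from Paul's article), and the function name is likewise invented for this example.

```python
import math


def years_to_close_gap(improvement_factor, doubling_time_years):
    """Years of steady exponential progress needed to improve by improvement_factor.

    Both arguments are illustrative assumptions, not measured values.
    """
    if improvement_factor < 1 or doubling_time_years <= 0:
        raise ValueError("need improvement_factor >= 1 and doubling_time_years > 0")
    # Number of doublings required is log2 of the gap; each doubling takes a fixed time.
    return doubling_time_years * math.log2(improvement_factor)


# Hypothetical scenario: resolution must improve a million-fold, doubling every 2 years.
baseline = years_to_close_gap(1e6, 2.0)

# Hypothetical pessimistic scenario: the estimate is off by three orders of magnitude.
pessimistic = years_to_close_gap(1e9, 2.0)

print(f"baseline: {baseline:.1f} years, pessimistic: {pessimistic:.1f} years")
```

The point of the sketch is that, on an exponential trend, an error of a factor of 1,000 in the complexity estimate adds only about ten more doublings rather than multiplying the timeline by 1,000. That is why the estimate would have to be wrong by many orders of magnitude to push the date out of this century, provided the trend itself holds.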

Furthermore, if it is the pattern rather than the substrate of the human brain that gives rise to cognition, then the word “simulation” will no longer be appropriate once our scanned models attain a sufficient degree of detail, because they will be cognizing. Yes. Unless trends in scanning technology cease, and unless there’s something magical about carbon, sufficiently detailed scanned models will cognize, even if we don’t understand how they do it any more than we understand how we do it.

Paul references scanning technology, claiming that it will be insufficient because it will need to model not merely brain state, but also brain function. In other words, it must be both spatially and temporally detailed. He’s right, of course, that function is an essential aspect of a detailed model of the human brain. However, he offers no reason to believe that the exponentially advancing capabilities of scanning technology will be limited to spatial details. Is he up to speed on advances in functional magnetic resonance imaging? If so, what am I missing?

I don’t know whether there will be a technological Singularity, and I don’t even think it would necessarily be a good thing (depending on the form it takes). However, it’s a mistake to bet against it simply because we think it will take humans a long time to understand our brains. We don’t have to understand them. We need only scan and model them, and our computers are already helping us do that, even when human scientists cannot.

The Institute for Ethics and Emerging Technologies later republished this article.